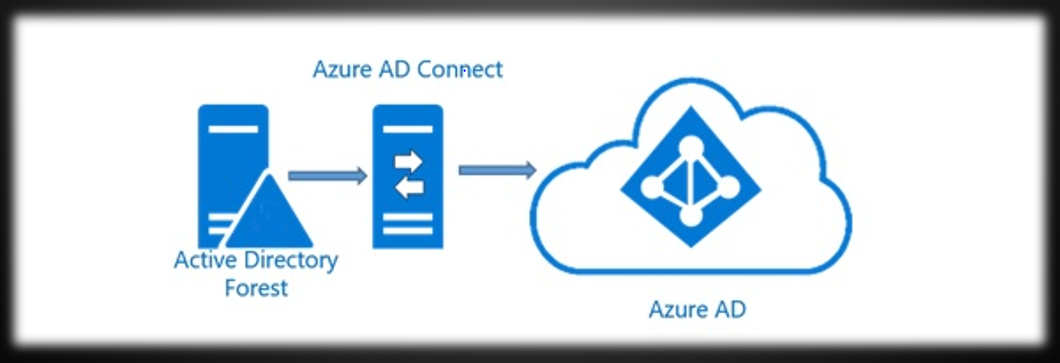

At my last job, I was involved in an Azure tenant migration prompted by a company merger. As part of this migration, I was tasked with moving some servers to the new tenant. The other resources were rebuilt in the new environment, but these particular machines had complex configurations that would have made rebuilding them very time-consuming. In this article, I document the steps I took to move the VMs to the new tenant using Azure Storage Explorer.